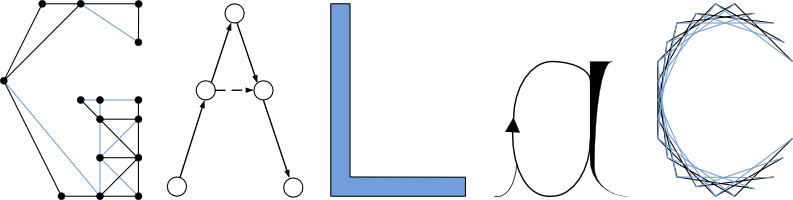

Complexity of neural network training and complexity proofs bypassing frontier issues

Time: 14:00 -- Location: LRI, 445

summary: We study the complexity of the neural network training decision problem in two different contexts.

First, in the general context, this problem has been shown to be in extensions of the class ∃R. We have

been able to show that whenever the activation functions are Lipschitz functions and the error function

is the norm 2, the decision problem can be solved in polynomial time with oracle in NP if we allow a "grey"

zone of small radius around the frontier of the problem. We have also been able to use such frontier of

difficult problem in the case of 3-coloring, and built an algorithm which is constant time in expectation.

Second, if the activation function is ReLU, the error function is an usual norm, and with only one neuron,

we have been able to show that the training decision problem is NP-complete.

In this talk, we will put a focus on the representation of certificates of such problems, which, in both

positive results around neural networks, consists in finding a rational certificate of polynomial size.